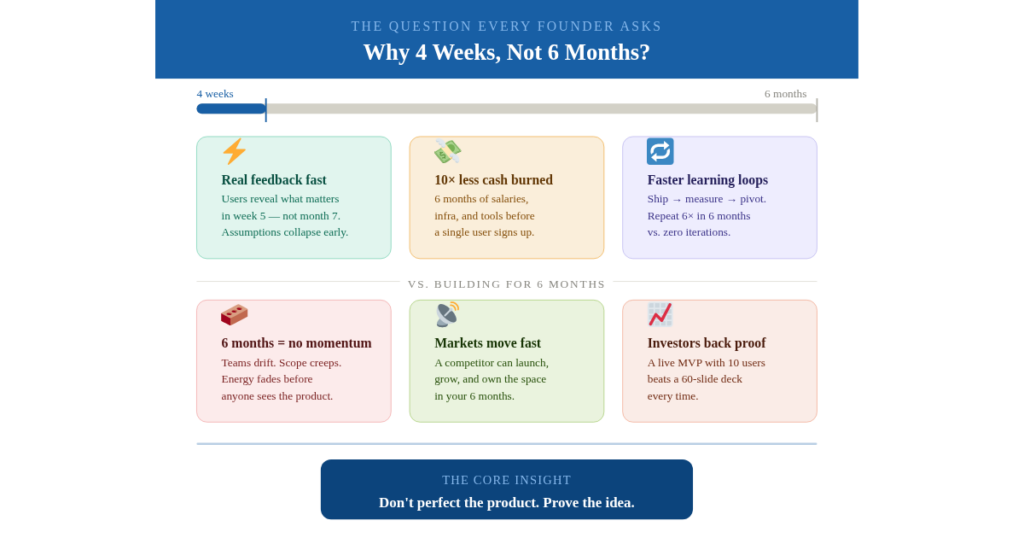

Building in 6 months means spending big before knowing if anyone wants your product. A 4-week MVP gets real users testing real software fast – cutting costs, exposing flawed assumptions early, and letting you pivot before it’s expensive. Speed isn’t the goal; smart validation is.

The 6-Month Trap Nobody Talks About

Here’s a scenario that plays out every day in the startup world.

A founder has a strong idea. They raise a seed round, hire a small dev team, and start building. Six months later, they finally launch, only to discover that the problem they were solving has already been addressed by a competitor, that the target users don’t actually behave the way they assumed, or that a minor feature nobody asked for turns out to be the only one anyone actually uses.

Six months of work. Hundreds of thousands of dollars. And three weeks of real usage taught them what months of internal meetings never could.

This is not an edge case. In CB Insights’ analysis of startup post-mortems, 42% of failed startups cited “no market need” as a reason they failed. Most of those teams spent months building in the dark before ever finding out.

The solution isn’t to build faster for the sake of moving fast. It’s to validate faster – to get a working product into real users’ hands before your assumptions harden into expensive mistakes. That’s exactly what building an MVP in 4 weeks forces you to do.

What a “Working MVP” Actually Means

Before going further, let’s kill a common misconception.

A working MVP is not a prototype. It’s not a Figma mockup. It’s not a landing page with a waitlist. A working MVP is a functional, deployable product that solves one core problem for a defined user segment – well enough that they would use it again, recommend it, or pay for it.

The “minimum” in Minimum Viable Product refers to scope, not quality. It means you’ve stripped the product to its essential value, not that you’ve shipped something buggy and half-baked.

A 4-week MVP should be:

- Functional – Core workflows actually work end-to-end

- Deployable – Live on a real URL or app store, not localhost

- Usable – Clean enough UX that a user can navigate without hand-holding

- Measurable – Has basic instrumentation to track usage and behavior

If it doesn’t meet that bar, it’s a demo. Demos are useful, but they don’t give you a real market signal.

The 4-Week MVP vs. 6-Month Build: A Direct Comparison

| Factor | 4-Week MVP | 6-Month Build |
|---|---|---|
| Time to first user feedback | 4 weeks | 6+ months |
| Development cost | $8K–$25K (fixed price range) | $80K–$300K+ |
| Scope risk | Low: forced to prioritize | High: scope creep is inevitable |
| Market assumptions validated | Before investing deeply | After spending fully |
| Iteration cycles before launch | 0 (iteration starts post-launch) | Multiple internal cycles on unvalidated features |
| Pivot cost | Low: minimal sunk cost | Very high: team, codebase, and stakeholder inertia |
| Team focus | Extremely high: one goal | Diffused: meetings, planning, refinements |

The numbers tell a clear story. The 6-month build isn’t just slower — it’s structurally riskier. Every week you spend building before you’ve spoken to real users is a week you’re operating on assumptions.

Why 4 Weeks Works: The Psychology and the Science

Parkinson’s Law Is Working Against You

Parkinson’s Law states that work expands to fill the time available for its completion. Give a team 6 months to build an MVP, and they will find 6 months’ worth of work to do – whether or not that work is essential to the core product.

A 4-week deadline largely eliminates that dynamic. There’s no room for “nice to have” features, endless design debates, or internal alignment meetings that stretch across three weeks. The constraint becomes a forcing function for focus.

The Lean Startup Logic Still Holds

Eric Ries popularized the Build-Measure-Learn loop in The Lean Startup, and the core insight remains as relevant today as it was in 2011: the faster you complete a learning loop, the faster you move toward product-market fit.

A 6-month build is a single, enormous Build phase with no Measure or Learn steps until the end. A 4-week MVP compresses the Build phase into one month, so you can Measure and Learn in weeks 5 through 8, then iterate and rebuild smarter from week 9 onward.
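To make the loop arithmetic concrete, here is a rough sketch that counts how many complete Build-Measure-Learn loops fit into a 26-week (roughly six-month) window. It assumes a 4-week build followed by 4 weeks of measuring and learning, repeated back to back, and treats the 6-month build as one 26-week build phase followed by a short measurement phase. The function name, the 26-week horizon, and the uniform loop length are illustrative assumptions, not figures from a study.

```python
def completed_loops(horizon_weeks: int, build_weeks: int, measure_weeks: int) -> int:
    """Count full Build-Measure-Learn loops that finish within the horizon.

    Assumes each loop is one build phase followed by one measure/learn
    phase, run back to back with no overlap (a simplification).
    """
    loop_length = build_weeks + measure_weeks
    return horizon_weeks // loop_length


HORIZON = 26  # roughly six months, in weeks

# 4-week MVP: build in weeks 1-4, measure and learn in weeks 5-8, repeat.
mvp_loops = completed_loops(HORIZON, build_weeks=4, measure_weeks=4)

# 6-month build: the whole horizon goes to building, so even a short
# measurement phase afterward pushes the first loop past the horizon.
big_build_loops = completed_loops(HORIZON, build_weeks=26, measure_weeks=4)

print(f"4-week MVP loops in {HORIZON} weeks: {mvp_loops}")            # 3
print(f"6-month build loops in {HORIZON} weeks: {big_build_loops}")   # 0
```

Under these assumptions the 4-week approach completes three full learning loops in the window while the 6-month build has not finished its first. Real iteration cycles are rarely this uniform, so the numbers illustrate the shape of the argument rather than a forecast.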

Investor Behavior Confirms It

Y Combinator, arguably the most data-rich startup program in existence, consistently advises founders to launch before they’re ready. Their reasoning: the discomfort of an imperfect launch teaches more than months of internal preparation. Founders who ship in weeks, not months, tend to raise follow-on rounds faster because they have evidence, not projections.

The 4-Week MVP Build Process: How It Actually Works

Launching an MVP in 4 weeks isn’t chaos; it’s an extremely disciplined, phase-gated process. Here’s a week-by-week framework of the kind high-velocity engineering teams use:

Week 1: Discovery and Ruthless Scope Definition

The first week is not about writing code. It’s about making irreversible decisions correctly.

Days 1–2: Problem and User Mapping

- Define the single user persona the MVP serves

- Map the exact problem being solved (not a category of problems – a specific, observable problem)

- Define the “aha moment” – the single action that delivers core value

Days 3–4: Feature Triage

- List every feature the product could have

- Apply a three-tier filter: Must-Have (MVP dies without it), Nice-to-Have (V2 candidate), Distraction (cut completely)

- Commit to Must-Haves only. This is often the hardest part.
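The three-tier filter is simple enough to express directly. The following is a minimal sketch, assuming each feature has already been assigned a tier by hand; the `Tier` enum and helper names are hypothetical, and the backlog is loosely modeled on the legal-tech scenario later in this piece (the onboarding-tour item is invented for illustration).

```python
from enum import Enum


class Tier(Enum):
    MUST_HAVE = "must-have"        # the MVP dies without it
    NICE_TO_HAVE = "nice-to-have"  # V2 candidate
    DISTRACTION = "distraction"    # cut completely


def triage(features: dict[str, Tier]) -> dict[Tier, list[str]]:
    """Group candidate features by tier, preserving input order."""
    buckets: dict[Tier, list[str]] = {tier: [] for tier in Tier}
    for name, tier in features.items():
        buckets[tier].append(name)
    return buckets


def mvp_scope(features: dict[str, Tier]) -> list[str]:
    """Only Must-Haves make it into the 4-week build."""
    return triage(features)[Tier.MUST_HAVE]


# Hypothetical backlog for illustration.
backlog = {
    "template library": Tier.MUST_HAVE,
    "AI-assisted clause editor": Tier.MUST_HAVE,
    "export to PDF and DOCX": Tier.MUST_HAVE,
    "e-signature integration": Tier.NICE_TO_HAVE,
    "client portal": Tier.NICE_TO_HAVE,
    "animated onboarding tour": Tier.DISTRACTION,
}

print(mvp_scope(backlog))
# ['template library', 'AI-assisted clause editor', 'export to PDF and DOCX']
```

The code is trivial on purpose: the hard part is the judgment that assigns each tier, and writing the result down makes it harder to quietly promote a Nice-to-Have back into scope.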

Day 5: Technical Architecture Decision

- Choose the stack that ships fastest, not the stack that’s most impressive

- Decide on third-party services vs. custom builds (payments: Stripe, not custom; auth: Auth0 or Clerk, not custom; etc.)

- Define the data model and core API contracts
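One lightweight way to pin down the data model and core API contracts on Day 5 is to write them as typed structures before any endpoint exists. The sketch below assumes an inventory-forecasting product like the SaaS analytics scenario later in this piece; every entity, field name, and the 90-day limit are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


# --- Data model (hypothetical entities for an inventory-forecasting MVP) ---

@dataclass(frozen=True)
class SalesRecord:
    sku: str
    day: date
    units_sold: int


# --- Core API contract: one request type, one response type ---

@dataclass(frozen=True)
class ForecastRequest:
    sku: str
    horizon_days: int  # how far ahead to forecast


@dataclass(frozen=True)
class ForecastResponse:
    sku: str
    daily_units: list[float]  # one predicted value per day in the horizon


MAX_HORIZON_DAYS = 90  # hypothetical limit agreed during the architecture decision


def validate(request: ForecastRequest) -> list[str]:
    """Return contract violations; an empty list means the request is valid."""
    errors = []
    if not request.sku.strip():
        errors.append("sku must not be empty")
    if not 1 <= request.horizon_days <= MAX_HORIZON_DAYS:
        errors.append(f"horizon_days must be between 1 and {MAX_HORIZON_DAYS}")
    return errors


print(validate(ForecastRequest(sku="SKU-123", horizon_days=30)))  # []
print(validate(ForecastRequest(sku="", horizon_days=365)))
```

Agreeing on shapes like these in Week 1 lets frontend and backend work proceed in parallel during Week 2 without renegotiating field names.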

Output: A one-page product brief, a wireframe of core flows, and a technical spec doc. Nothing else.

Week 2: Core Build – The Engine

Week 2 is heads-down engineering. No new feature requests. No design rethinks.

Focus areas:

- Backend: Core data models, primary API endpoints, authentication

- Frontend: Core user journey – only the screens that touch the primary workflow

- Integrations: Any third-party tools that are load-bearing (payments, notifications, storage)

Key rule: If a feature doesn’t appear in the core user journey mapped in Week 1, it does not get built in Week 2.

Engineering practice: Use proven UI building blocks (shadcn/ui and Radix components, styled with Tailwind CSS) rather than building UI from scratch. The goal is a working product, not a custom design system.

Week 3: Complete Flows and Internal QA

The product needs to work end-to-end, not just feature-by-feature.

Focus areas:

- Connect all screens into complete user journeys

- Edge case handling for the most common failure paths

- Instrumentation: Add basic analytics (Mixpanel, PostHog, or Amplitude) to track core actions

- Internal QA: Every team member uses the product as if they’re the target user
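The instrumentation step is mostly about deciding which core actions to record. Mixpanel, PostHog, and Amplitude each have their own SDKs, so rather than guess at their APIs, this sketch uses a hypothetical in-memory `EventLog` to show the idea: record a few named events per user, then read them back as a funnel from sign-up to the “aha moment” defined in Week 1. The event names are placeholders.

```python
from collections import defaultdict


class EventLog:
    """In-memory stand-in for an analytics backend (hypothetical, for illustration)."""

    def __init__(self) -> None:
        self.events: list[tuple[str, str]] = []  # (user_id, event_name)

    def track(self, user_id: str, event: str) -> None:
        self.events.append((user_id, event))

    def funnel(self, steps: list[str]) -> list[int]:
        """Count users at each step who also reached every earlier step."""
        users_by_event: dict[str, set[str]] = defaultdict(set)
        for user_id, event in self.events:
            users_by_event[event].add(user_id)

        counts = []
        survivors = None
        for step in steps:
            reached = users_by_event[step]
            survivors = reached if survivors is None else survivors & reached
            counts.append(len(survivors))
        return counts


log = EventLog()
for user in ["u1", "u2", "u3", "u4"]:
    log.track(user, "signed_up")
for user in ["u1", "u2", "u3"]:
    log.track(user, "completed_onboarding")
for user in ["u1", "u4"]:
    log.track(user, "reached_aha_moment")

print(log.funnel(["signed_up", "completed_onboarding", "reached_aha_moment"]))  # [4, 3, 1]
```

User `u4` reached the aha moment without finishing onboarding, so the strict funnel does not count them at the last step. Whether that is the right definition is exactly the kind of decision worth making before launch rather than after.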

What to ignore: Performance optimization, SEO, accessibility for edge cases, mobile responsiveness beyond core breakpoints (unless mobile is the primary surface).

Output: A product that a non-technical person can use without assistance, from sign-up to core value delivery.

Week 4: Polish, Deploy, and Soft Launch

The final week is about getting to production and into real hands.

Focus areas:

- Bug fixes from internal QA

- Deployment infrastructure (Vercel, Railway, or AWS – whichever is fastest to configure)

- Onboarding flow: The first 3 minutes of user experience must be frictionless

- Soft launch: 10–50 real users from your target segment (not friends and family)

Critical: Start collecting feedback by Day 28, inside the four-week window, not after it. The goal of the 4-week MVP is not “build finished.” It’s “users are using it, and we’re watching.”

AI Velocity Pods: The New Unfair Advantage

One of the most significant shifts in 2024–2025 is that MVP development has become dramatically cheaper and faster because of how AI-powered engineering teams operate.

AI Velocity Pods are small, specialized teams (typically 2–4 people) that use AI tools — Cursor, GitHub Copilot, Claude for code generation, Lovable, and similar tools — as a core part of the development workflow. Not as a gimmick, but as an integrated productivity layer.

What this changes in practice:

- Boilerplate code that used to take a developer a day to write now takes hours

- API integrations are scaffolded in minutes, not days

- Test coverage is generated alongside code, not after

- Documentation is auto-generated, freeing engineers to focus on logic

An AI Velocity Pod building a SaaS product in 2025 can produce in 4 weeks what a traditional team of 4–5 engineers might have needed 10–12 weeks to build in 2022. That is a structural shift in what’s achievable, not an incremental one.

This is why a 4-week MVP at a fixed price has become a credible, deliverable offer from specialized agencies and venture studios. The economics work in ways they simply didn’t three years ago.

Real-World Scenarios: What Gets Built in 4 Weeks

Scenario 1: SaaS Analytics Dashboard

The ask: A B2B founder wants to validate whether mid-market e-commerce brands will pay for automated inventory forecasting.

The 4-week MVP:

- User authentication and onboarding

- CSV import for inventory and sales data

- A forecasting engine (using a pre-trained model, not custom ML)

- A dashboard showing forecast vs. actual with a simple alert system

- A Stripe-gated trial flow
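Around the pre-trained model, the custom logic in this MVP is small: import the CSV and flag SKUs where actual sales diverge from the forecast. The scenario does not say how its alerts were defined, so this sketch assumes hypothetical `sku` and `units_sold` CSV columns and a plain percentage-deviation rule; the forecast numbers are made up and stand in for the model’s output.

```python
import csv
import io


def load_sales(csv_text: str) -> dict[str, int]:
    """Parse CSV rows with columns sku,units_sold into total units per SKU."""
    totals: dict[str, int] = {}
    for row in csv.DictReader(io.StringIO(csv_text)):
        sku = row["sku"]
        totals[sku] = totals.get(sku, 0) + int(row["units_sold"])
    return totals


def alerts(forecast: dict[str, float], actual: dict[str, int], tolerance: float = 0.2) -> list[str]:
    """Flag SKUs whose actual sales miss the forecast by more than `tolerance` (20% by default)."""
    flagged = []
    for sku, expected in forecast.items():
        observed = actual.get(sku, 0)
        # Skip zero forecasts to avoid dividing by zero.
        if expected > 0 and abs(observed - expected) / expected > tolerance:
            flagged.append(sku)
    return flagged


sales_csv = """sku,units_sold
A-100,40
A-100,35
B-200,10
"""

# Stand-in values for the pre-trained model's forecast (made up).
forecast = {"A-100": 70.0, "B-200": 25.0}

print(alerts(forecast, load_sales(sales_csv)))  # ['B-200']
```

A-100 sold 75 units against a forecast of 70 (about 7% off), so it stays quiet; B-200 sold 10 against 25 (60% off) and is flagged. A fixed tolerance is crude, but it is enough to test whether users act on alerts at all.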

The result: 12 beta users in week 5. By week 8, 4 of them had converted to paid plans at $299/month. The founder had an early product-market fit (PMF) signal before spending a dollar on marketing.

Scenario 2: AI-Powered Legal Document Tool

The ask: A legal-tech startup wants to build a tool that helps small law firms draft standard contracts faster.

The 4-week MVP:

- A template library of 15 common contract types

- An AI-assisted clause editor (GPT-4 API for suggestions)

- Export to PDF and DOCX

- Simple team sharing via link

Cut for V2: Custom template builder, client portal, e-signature integration, and billing by document.

The result: The team discovered in week 6 that users didn’t care about clause suggestions — they cared about the template library. That insight completely redirected the product roadmap. Without the MVP, they would have spent 3 more months building the AI layer no one wanted.

Scenario 3: Marketplace MVP

The ask: A two-sided marketplace connecting freelance designers with DTC brands for user-generated content (UGC).

The 4-week MVP:

- Designer profile creation and portfolio upload

- Brand brief submission form

- Manual matching (the founder pairs brands with designers by hand; no algorithm yet)

- Stripe Connect for payment escrow

- Basic messaging
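The app-side bookkeeping for this MVP can be a small state machine that tracks each brief from submission through manual matching to payout. Stripe Connect handles the actual money movement in this scenario; how funds are held and released depends on how that integration is configured, and none of its API is modeled here. The states and class names below are hypothetical.

```python
from enum import Enum, auto


class BriefState(Enum):
    SUBMITTED = auto()  # brand filled in the brief form
    MATCHED = auto()    # founder paired it with a designer by hand
    FUNDED = auto()     # brand's payment is held, not yet paid out
    DELIVERED = auto()  # designer uploaded the content
    RELEASED = auto()   # brand approved; funds go to the designer


# Allowed transitions; anything else is rejected.
TRANSITIONS = {
    BriefState.SUBMITTED: {BriefState.MATCHED},
    BriefState.MATCHED: {BriefState.FUNDED},
    BriefState.FUNDED: {BriefState.DELIVERED},
    BriefState.DELIVERED: {BriefState.RELEASED},
    BriefState.RELEASED: set(),
}


class Brief:
    def __init__(self, brand: str) -> None:
        self.brand = brand
        self.designer = None  # set during manual matching
        self.state = BriefState.SUBMITTED

    def advance(self, new_state: BriefState) -> None:
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"cannot go from {self.state.name} to {new_state.name}")
        self.state = new_state

    def match(self, designer: str) -> None:
        """Manual matching: the founder picks the designer."""
        self.advance(BriefState.MATCHED)  # raises before any mutation if out of order
        self.designer = designer


brief = Brief(brand="Example DTC brand")
brief.match("designer_42")
brief.advance(BriefState.FUNDED)
brief.advance(BriefState.DELIVERED)
brief.advance(BriefState.RELEASED)
print(brief.state.name, brief.designer)  # RELEASED designer_42
```

Keeping the transitions explicit means the founder can run matching by hand without risking a payout being released for work that was never delivered.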

Cut for V2: Algorithmic matching, reviews and ratings, advanced search, analytics for brands.

The result: 20 transactions in the first month. Real revenue. Real user behavior data. And a clear picture of which side of the marketplace was harder to supply – information that would have taken months to surface through internal planning.

The Hidden Costs of Waiting 6 Months

The financial cost of a 6-month build is obvious. The hidden costs are less discussed:

1. Opportunity Cost. The market doesn’t pause while you build. A competitor who launched 3 months before you has 3 months of user data, SEO authority, and customer relationships you don’t.

2. Team Entropy. Long build cycles without user feedback breed internal disagreement. “Should we add X feature?” becomes a political question instead of an empirical one. Morale drops when there’s no external validation.

3. Assumption Calcification. The longer you build before getting feedback, the more emotionally and financially invested your team is in the decisions you’ve made. Pivoting after 6 months is enormously harder than pivoting after 4 weeks, not because the technical debt is worse (though it often is), but because the human cost of admitting a wrong assumption is higher.

4. Investor Patience. If you raised pre-product money, your investors expect to see traction signals within 3–6 months. A 6-month build that launches at month 6 gives you zero time to show traction before your next conversation. A 4-week MVP gives you 4+ months of traction data within the same window.

Common Objections

Our product is too complex to build in 4 weeks.

In almost every case, this reflects scope confusion, not genuine complexity. The question is never “can we build the whole product in 4 weeks?” It’s “what is the smallest version of the product that creates real value for one user type?” That version is almost always buildable in 4 weeks.

If you genuinely cannot define that version, that’s a product strategy problem, not a timeline problem.

We’ll accumulate too much technical debt.

Technical debt is a real concern. But it’s manageable debt – debt you can pay down when you have revenue and proof that the product is worth investing in. The alternative is spending 6 months building a clean, well-architected system for a product nobody wants. That’s not debt. That’s a total loss.

Our industry requires compliance and security from day one.

Legitimate in specific industries – healthcare, fintech, defense. But even in regulated industries, the discovery and wireframing phase, early user interviews, and compliance-scoped prototypes can be compressed dramatically. The 4-week framework adapts; it doesn’t disappear.

We need to get it right before we show anyone.

This is the most dangerous objection of all. “Right” is defined by users, not by your internal standards. Showing a good-enough product to 20 users is how you find out what “right” actually means. Building in isolation for 6 months and then showing it is how you find out you were wrong – after it’s too late to course-correct cheaply.

The 4-Week MVP Guarantee: What to Look for in a Build Partner

If you’re working with an external team or agency to build your MVP in 4 weeks, the quality of that engagement depends on a few non-negotiable factors:

1. Fixed Scope, Fixed Timeline, Fixed Price. Any partner offering a 4-Week MVP Guarantee should be able to commit to all three. If the scope is defined clearly in Week 1, there is no reason the timeline or price should move. Beware of teams that give fixed timelines but variable prices.

2. A Discovery Week That’s Treated as Seriously as the Build Weeks. The Week 1 process described above is not administrative overhead – it’s the most important week of the project. Partners who skip or rush discovery almost always deliver the wrong thing on time.

3. AI-Augmented Development Capabilities. In 2025, a team that isn’t using AI tools in its development workflow is working at a structural disadvantage. Ask directly: what AI tools does your team use, and how do they integrate with your delivery process?

4. Post-Launch Continuity. The MVP launch is not the end of the engagement; it’s the beginning of the learning phase. The best build partners have a clear plan for what happens in weeks 5–8, including analytics review, user interview facilitation, and iteration prioritization.

Key Takeaways

If you take nothing else from this piece, take these:

- The primary purpose of an MVP is learning, not launching. Speed matters because faster learning means smarter decisions – not because shipping fast is inherently virtuous.

- A 6-month build is a single, enormous bet. A 4-week MVP is the first in a series of smaller, cheaper bets. The expected value of the second approach is almost always higher.

- Scope discipline is the hard part. The technical execution of a 4-week MVP is straightforward with the right team. The hard part is committing to what you will not build.

- AI Velocity Pods have changed the economics. What might have cost $200K and 5 months in 2021 can now cost roughly $20K and 4 weeks for teams using AI-augmented workflows. The playing field has shifted dramatically.

- Your assumptions about what users want are probably wrong. Not partially wrong – significantly wrong in at least one important dimension. The only way to find out which dimension is to ship and watch.

- Build an MVP in 4 weeks, then earn the right to build more. Every feature you add after launch should be justified by user behavior, not founder intuition. The 4-week MVP is how you start collecting that justification.
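The “series of smaller bets” point can be made concrete with a deliberately simplified calculation. This sketch compares only the money at risk before the first real user feedback, assuming that spend is lost if the idea turns out to have no market need and that feedback reliably reveals this. The probability is a hypothetical parameter, not a measured figure; the costs are taken from the comparison table earlier in this piece, pairing the most expensive MVP with the cheapest 6-month build.

```python
def expected_wasted_spend(p_no_market: float, spend_before_feedback: float) -> float:
    """Expected spend lost on an idea that turns out to have no market need.

    Assumes everything spent before the first real user feedback is lost
    if the idea fails, and that feedback reliably reveals the failure.
    """
    return p_no_market * spend_before_feedback


P_NO_MARKET = 0.5  # hypothetical probability, chosen for illustration only

mvp = expected_wasted_spend(P_NO_MARKET, 25_000)      # top of the 4-week range
big_bet = expected_wasted_spend(P_NO_MARKET, 80_000)  # bottom of the 6-month range

print(f"4-week MVP: ${mvp:,.0f} expected waste")        # $12,500
print(f"6-month build: ${big_bet:,.0f} expected waste")  # $40,000
```

Because the expression is linear in the probability, the staged approach puts less money at risk for any nonzero chance of failure under these assumptions. The model ignores upside, time value, and misleading feedback, so it supports the direction of the argument, not a precise dollar figure.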

Conclusion: The Market Doesn’t Wait for Perfect

The founders who win in the early stages are rarely the ones who built the best product in secrecy. They’re the ones who got to market feedback fastest, updated their understanding soonest, and compounded their learning cycles while their competitors were still in planning meetings.

Building a working MVP in 4 weeks is not a shortcut – it’s the most disciplined thing an early-stage team can do. It forces clarity, rewards focus, eliminates assumptions, and puts you in front of the only feedback that actually matters: real users, using your real product, in their real context.